Orbitport: Gateway to Orbital Services

Satellites are complicated infrastructure. Orbital mechanics, ground station scheduling, communication protocols, data buffering, pass windows... none of this is something an application developer should have to think about. But if you're curious, head to the satellite communication concepts page.

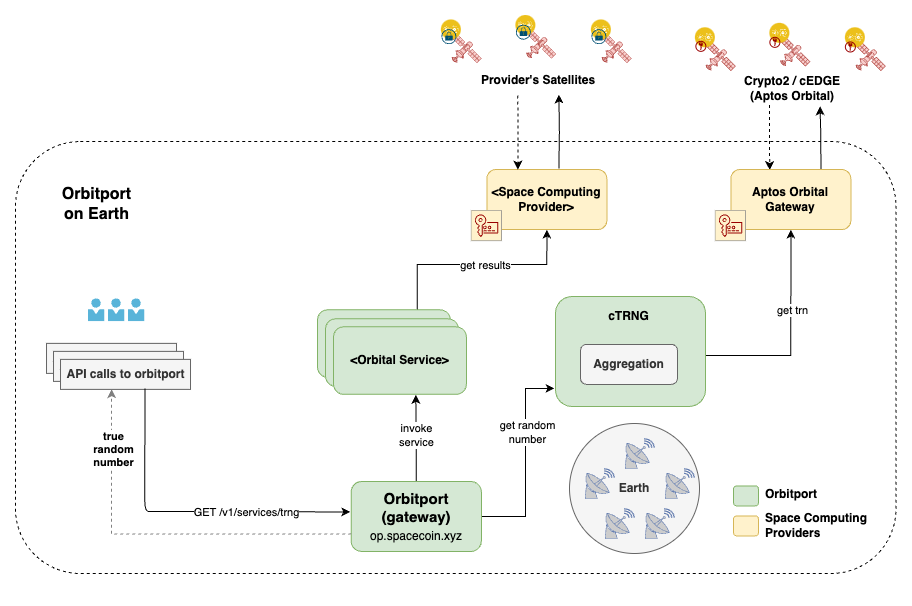

Orbitport is the abstraction layer that makes orbital services consumable through standard web APIs. One endpoint, one SDK, and the orbital complexity stays behind the curtain.

What Orbitport does

At its core, Orbitport is a gateway. It sits between your application and a growing constellation of satellite providers, handling:

- Data ingestion from satellites via ground stations

- Source management across multiple providers and hardware payloads

- Authentication and access control

- Automatic source selection and fallback when a given source is unavailable

- Public data distribution through the IPFS beacon

- Signature verification to ensure data integrity from satellite to consumer

You interact with Orbitport the way you'd interact with any REST API. The fact that the data originates from hardware moving at roughly 7.5 km/s in low Earth orbit is, from your application's perspective, an implementation detail.

The plugin architecture

Orbitport is designed around a three-layer plugin architecture. This is an internal design choice that lets the system scale to new satellites, new providers, and new services without redesigning the core.

Celestial layer

This is the satellite side. Each satellite provider integrates as a plugin that speaks the provider's native protocol and translates data into Orbitport's internal format.

Currently, the celestial layer supports these payloads:

- cEDGE: cosmic radiation detection hardware that generates cTRNG values

- Crypto2: an additional payload with similar capabilities

As more satellite operators come online with orbital compute or sensing services, they integrate at this layer. The rest of the stack doesn't need to change.

Terrestrial layer

This layer handles ground station infrastructure and communication. It manages:

- Scheduling and prioritizing satellite passes

- Receiving and buffering data downloads

- Routing data from ground stations to Orbitport's processing pipeline

Different ground station networks can plug in here. Some providers operate their own ground stations, while others use shared networks. The terrestrial layer normalizes these differences.

Application layer

This is what you interact with. The application layer exposes orbital services through clean, documented APIs:

- cTRNG: cosmic True Random Number Generation, available now

- spaceTEE: Trusted Execution from orbit, on the roadmap

Each service has its own API surface, but they share common infrastructure for authentication, rate limiting, and source management.

What Orbitport abstracts away

Satellite infrastructure has properties that would be painful to deal with directly. Data arrives in batches during short pass windows, not as a continuous stream. Latency is variable: seconds if data was recently downloaded, minutes if you are pulling from an older buffer. Availability depends on orbital mechanics: predictable gaps, but gaps nonetheless.

None of this is your problem. Orbitport's entire job is to make orbital services feel like calling a normal API. Source selection picks the freshest available data. The IPFS beacon publishes every 60 seconds regardless of satellite pass schedules, so there's always something recent. The SDK handles fallback automatically. Your code calls sdk.ctrng.random() and gets a value; where it came from and how it reached the ground are handled behind the curtain.

One thing worth understanding: "freshness" means something different for randomness than for most data. A cTRNG value generated twenty minutes ago is exactly as random as one generated a second ago. Randomness doesn't expire. So even when satellite contact is intermittent, the data you get is no less useful. The only case where freshness matters is if you need to prove a value was generated after some event. And for that, the IPFS beacon's timestamped blocks have you covered.

If you want to understand the satellite communication model that creates these constraints, see How Satellites Communicate. But for integration purposes, you can treat Orbitport as a normal REST API.

Source selection and the fallback chain

One of Orbitport's most important jobs is deciding where your data comes from. Not all sources are equal, and not all sources are always available.

When you request cTRNG data, Orbitport evaluates available sources and picks the best one:

Primary: aptosorbital

Direct satellite data. Random bytes generated from cosmic radiation by satellite hardware, cryptographically signed onboard. This is the highest-assurance source: true cosmic randomness with full provenance.

Availability depends on satellite passes. If the satellite recently passed over a ground station, fresh data is in the buffer. If not, this source may be temporarily unavailable.

Secondary: derived

When fresh satellite data isn't in the buffer, Orbitport can derive additional random values using BIP32 hierarchical deterministic key derivation from a cosmic master seed. The master seed was generated in orbit from cosmic radiation, so derived values inherit its entropy properties.

This is a practical tradeoff. Derived values aren't independently generated cosmic random numbers, but they are cryptographically derived from a seed that was. For most applications, derived randomness is indistinguishable in quality from direct satellite randomness. The difference matters mainly for auditability: you can't trace a derived value back to a specific cosmic ray event.

Public: IPFS beacon

The IPFS beacon operates on a fixed 60-second publishing cycle, independent of API requests. It's always available, requires no authentication, and provides a public, append-only record of cTRNG values.

The TypeScript SDK (@spacecomputer-io/orbitport-sdk-ts) implements this fallback chain automatically. You make one call, the SDK evaluates source availability, and returns the best data it can get. You can also request specific sources explicitly if your application requires it.
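The fallback order described in this section can be sketched as a small selection function. This is an illustration of the idea, not the SDK's implementation: `pickSource`, `SourceStatus`, and the `"ipfs"` identifier are hypothetical names chosen for the example. Only `aptosorbital` and `derived` appear in the text as source names.

```typescript
// Illustrative only: these types and names are assumptions, not the SDK's API.
type SourceName = "aptosorbital" | "derived" | "ipfs";

interface SourceStatus {
  name: SourceName;
  available: boolean;
}

// Preference order from the text: direct satellite data, then derived
// values, then the public beacon.
const FALLBACK_ORDER: SourceName[] = ["aptosorbital", "derived", "ipfs"];

// Returns the source to use, or undefined if nothing suitable is available.
// When `requested` is given, only that source is considered (no fallback).
function pickSource(
  statuses: SourceStatus[],
  requested?: SourceName,
): SourceName | undefined {
  const isAvailable = (name: SourceName): boolean =>
    statuses.some((s) => s.name === name && s.available);

  if (requested !== undefined) {
    return isAvailable(requested) ? requested : undefined;
  }
  return FALLBACK_ORDER.find(isAvailable);
}

// Example: the satellite buffer is empty, so the derived source is chosen.
const choice = pickSource([
  { name: "aptosorbital", available: false },
  { name: "derived", available: true },
  { name: "ipfs", available: true },
]);
console.log(choice); // derived
```

Treating an explicit request as "this source or nothing" is one possible design; a real client might instead fall back and report which source was actually used.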

The IPFS beacon in detail

The beacon deserves its own section because it serves a dual purpose: it's both a fallback data source and a public transparency mechanism.

Every 60 seconds, Orbitport publishes a new block to IPFS with the following structure:

    {
      "previous": "/ipfs/bafkrei...",
      "data": {
        "sequence": 87963,
        "timestamp": 1769179239,
        "ctrng": [
          "88943046891c6c971f185c7cd69a350d...",
          "802a5afa3b09c360ec56cbe67cb615e0...",
          "dbbe94501ed32c55acb4ad4512da0c38..."
        ]
      }
    }

Each block contains:

- sequence: a monotonically increasing block number

- timestamp: Unix timestamp of block creation

- ctrng: an array of cTRNG values (currently 3 per block, but this may increase)

- previous: an IPFS CID pointing to the prior block, forming a chain
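For consumers reading the beacon directly, the block fields can be written down as a TypeScript type with a runtime check. The sketch below mirrors the sample block shown earlier; the names `BeaconBlock` and `isBeaconBlock` are hypothetical, and the check assumes every block carries a `previous` field (how the very first block is represented is not covered here).

```typescript
// Shape of a beacon block, mirroring the sample JSON. Names are illustrative.
interface BeaconBlock {
  previous: string; // IPFS path of the prior block
  data: {
    sequence: number; // monotonically increasing block number
    timestamp: number; // Unix timestamp of block creation
    ctrng: string[]; // cTRNG values
  };
}

// Runtime check that an unknown JSON value has the expected shape.
function isBeaconBlock(value: unknown): value is BeaconBlock {
  if (typeof value !== "object" || value === null) return false;
  const outer = value as Record<string, unknown>;
  if (typeof outer.previous !== "string") return false;

  const inner = outer.data;
  if (typeof inner !== "object" || inner === null) return false;
  const data = inner as Record<string, unknown>;

  const ctrng = data.ctrng;
  return (
    Number.isInteger(data.sequence) &&
    Number.isInteger(data.timestamp) &&
    Array.isArray(ctrng) &&
    ctrng.every((v: unknown) => typeof v === "string")
  );
}

// Example using values from the sample block (truncated as in the text).
const sample: unknown = JSON.parse(
  '{"previous":"/ipfs/bafkrei...","data":{"sequence":87963,' +
    '"timestamp":1769179239,"ctrng":["88943046891c6c971f185c7cd69a350d..."]}}',
);
console.log(isBeaconBlock(sample)); // true
```

The check deliberately does not fix the length of `ctrng`, since the number of values per block may change.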

The chain structure means anyone can traverse the full history of published randomness. This is important for applications that need to audit or verify randomness after the fact: lottery results, random selection processes, anything where fairness needs to be provable.
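An audit of this kind might walk backward through `previous` links and then check that the fetched blocks are consistent with one another. The sketch below covers only the consistency check, under stated assumptions: blocks have already been fetched and are listed newest first, `previous` uses the `/ipfs/<cid>` form seen in the sample block, and the IPFS client has already verified that each block's content matches its CID. `FetchedBlock` and `findChainBreak` are hypothetical names.

```typescript
interface BeaconBlock {
  previous: string;
  data: { sequence: number; timestamp: number; ctrng: string[] };
}

// A block paired with the CID it was fetched under (hypothetical wrapper).
interface FetchedBlock {
  cid: string;
  block: BeaconBlock;
}

// Strips the "/ipfs/" prefix seen in the sample block's `previous` field.
function cidFromPath(path: string): string {
  const prefix = "/ipfs/";
  return path.startsWith(prefix) ? path.slice(prefix.length) : path;
}

// `entries` is ordered newest first. Returns the index of the first entry
// whose link to the next-older entry is inconsistent, or -1 if all links hold.
function findChainBreak(entries: FetchedBlock[]): number {
  for (let i = 0; i + 1 < entries.length; i++) {
    const newer = entries[i];
    const older = entries[i + 1];
    const linked = cidFromPath(newer.block.previous) === older.cid;
    const ordered =
      older.block.data.sequence < newer.block.data.sequence &&
      older.block.data.timestamp <= newer.block.data.timestamp;
    if (!linked || !ordered) return i;
  }
  return -1;
}

// Example with made-up CIDs: two blocks that link up correctly.
const history: FetchedBlock[] = [
  { cid: "cid-b", block: { previous: "/ipfs/cid-a", data: { sequence: 2, timestamp: 120, ctrng: [] } } },
  { cid: "cid-a", block: { previous: "/ipfs/cid-0", data: { sequence: 1, timestamp: 60, ctrng: [] } } },
];
console.log(findChainBreak(history)); // -1
```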

Because the beacon uses IPNS for its stable address, consumers always fetch the latest block from the same URL. IPFS content addressing guarantees that once a block is published, it can't be modified retroactively without changing its CID (and breaking the chain).

No authentication is required to read the beacon. It's a public good.

Authentication

API access to Orbitport uses OAuth2 via Auth0. The flow is standard:

- You register and receive a client ID and client secret.

- Your server exchanges these credentials for an access token.

- You include the token in API requests as a Bearer token.

- Tokens expire and need to be refreshed.

The SDK handles token lifecycle automatically if you provide credentials. For server-side integrations, the recommended pattern is a proxy that manages tokens centrally and exposes a simpler interface to your client-side code.
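For integrations that manage tokens themselves, such as the proxy pattern mentioned above, the lifecycle might look like the following sketch. It assumes the OAuth2 client-credentials grant, which is what the "exchange credentials for a token" step suggests but is not confirmed here. The `audience` value is deployment-specific and left as a parameter. `buildTokenRequestBody` and `TokenCache` are hypothetical helper names, and the network call itself is omitted.

```typescript
// Shape of an OAuth2 token response (field names per RFC 6749).
interface TokenResponse {
  access_token: string;
  token_type: string;
  expires_in: number; // lifetime in seconds
}

// Builds the JSON body for a client-credentials token request.
// Keep the client secret on the server; never ship it to a browser.
function buildTokenRequestBody(
  clientId: string,
  clientSecret: string,
  audience: string,
): string {
  return JSON.stringify({
    grant_type: "client_credentials",
    client_id: clientId,
    client_secret: clientSecret,
    audience,
  });
}

// Caches a token and treats it as unusable shortly before it expires,
// so callers request a new one instead of sending a stale token.
class TokenCache {
  private token: string | undefined;
  private expiresAtMs = 0;
  private readonly safetyMarginMs: number;

  constructor(safetyMarginMs = 30000) {
    this.safetyMarginMs = safetyMarginMs;
  }

  store(response: TokenResponse, nowMs: number): void {
    this.token = response.access_token;
    this.expiresAtMs = nowMs + response.expires_in * 1000;
  }

  // Returns the cached token, or undefined when a new one must be requested.
  get(nowMs: number): string | undefined {
    if (this.token === undefined) return undefined;
    return nowMs < this.expiresAtMs - this.safetyMarginMs ? this.token : undefined;
  }
}

// Example: a one-hour token is served from cache, then reported as expired.
const cache = new TokenCache();
cache.store({ access_token: "example-token", token_type: "Bearer", expires_in: 3600 }, 0);
console.log(cache.get(1000)); // example-token
console.log(cache.get(3600 * 1000)); // undefined
```

Passing the current time in explicitly keeps the cache deterministic and easy to test; production code would typically pass `Date.now()`.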

The IPFS beacon, by contrast, requires no authentication at all.

API surface

The current API is focused on cTRNG:

GET /api/v1/services/trng

This returns cosmic random data from the best available source. You can specify source preferences and other parameters. Full details are in the SDK Quickstart.
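If you call the endpoint without the SDK, the request is an ordinary authenticated GET. The sketch below only assembles the URL and headers. The base URL `https://orbitport.example` is a placeholder, `buildTrngRequest` is a hypothetical helper, and query parameters are passed through generically because their names are documented in the SDK Quickstart rather than here.

```typescript
interface TrngRequest {
  url: string;
  headers: Record<string, string>;
}

// Assembles a request for the cTRNG endpoint. `baseUrl` is a placeholder;
// the path comes from the text above. `params` are passed through as-is.
function buildTrngRequest(
  baseUrl: string,
  accessToken: string,
  params: Record<string, string> = {},
): TrngRequest {
  const query = Object.keys(params)
    .map((key) => `${encodeURIComponent(key)}=${encodeURIComponent(params[key])}`)
    .join("&");
  const path = "/api/v1/services/trng";
  // Drop trailing slashes from the base so the path joins cleanly.
  const url = baseUrl.replace(/\/+$/, "") + path + (query ? `?${query}` : "");
  return { url, headers: { Authorization: `Bearer ${accessToken}` } };
}

const req = buildTrngRequest("https://orbitport.example", "example-token");
console.log(req.url); // https://orbitport.example/api/v1/services/trng
```

The result can be handed to any HTTP client, for example `fetch(req.url, { headers: req.headers })`.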

As SpaceComputer adds services (starting with spaceTEE), new endpoints will appear under the same base URL, using the same authentication and the same SDK.

Current state and what's ahead

Today, Orbitport operates as a centralized gateway. All requests flow through SpaceComputer's infrastructure, which manages satellite data ingestion, source selection, and distribution. This is the pragmatic starting point: it works, it's simple to integrate with, and it lets the team iterate on the satellite infrastructure without breaking consumer APIs.

The roadmap moves toward decentralization:

More satellites. The plugin architecture means adding a new satellite provider is an integration task, not a redesign. Each new satellite increases the volume and freshness of available data.

More services. cTRNG is the first orbital service. spaceTEE (trusted execution in orbit) is next. The same architectural layers (celestial, terrestrial, application) support both.

More providers. Orbitport is designed to be provider-agnostic. As the market for orbital compute services grows, multiple satellite operators can feed into the same gateway.

Distributed operation. The long-term vision is a permissioned network of Orbitport nodes, reducing single-point-of-failure risk and enabling geographic distribution of the gateway itself. The IPFS beacon is an early step in this direction: its distribution is already decentralized.

For developers integrating today, the key point is that the API surface is stable even as the backend evolves. Your integration code won't need to change as Orbitport adds satellites, providers, and services behind the same endpoints.